"Give me a child and I'll shape him into anything" - B. F. Skinner

Who is B. F. Skinner?

Burrhus Frederic "B. F." Skinner (March 20, 1904 – August 18, 1990) was an American psychologist, behaviorist, author, inventor, and social philosopher. He was the Edgar Pierce Professor of Psychology at Harvard University from 1958 until his retirement in 1974.

He developed his own philosophy of science, called radical behaviorism, and founded his own school of experimental research psychology, the experimental analysis of behavior. His analysis of human behavior culminated in his work Verbal Behavior, as well as his philosophical manifesto Walden Two. Both works have recently seen an enormous increase in interest, experimentally and in applied settings. Contemporary academia considers Skinner a pioneer of modern behaviorism, along with John B. Watson and Ivan Pavlov.

Skinner and Operant Conditioning

Operant conditioning is a type of learning in which an individual's behavior is modified by its consequences; the behavior may change in form, frequency, or strength. B. F. Skinner coined the term “operant conditioning” in 1937. The word operant refers to "an item of behavior that is initially spontaneous, rather than a response to a prior stimulus, but whose consequences may reinforce or inhibit recurrence of that behavior".

Operant conditioning is distinguished from classical conditioning in that operant conditioning deals with the modification of "voluntary behavior", or operant behavior. Operant behavior operates on the environment and is maintained by its consequences, while classical conditioning deals with the conditioning of reflexive behaviors, which are elicited by antecedent conditions. Behaviors conditioned via a classical conditioning procedure are not maintained by consequences.

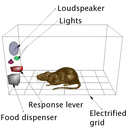

An example of a "Skinner box"

Skinner's main tool for researching operant conditioning was the operant conditioning chamber (a.k.a. the Skinner box). The design of Skinner boxes can vary depending upon the type of animal and the experimental variables. The box is a chamber that includes at least one lever, bar, or key that the animal can manipulate. When the lever is pressed, food, water, or some other type of reinforcement might be dispensed. Other stimuli can also be presented, including lights, sounds, and images. In some instances, the floor of the chamber may be electrified. In Skinner's experiments, a subject was placed in the box, and the mechanism gave small amounts of food each time the subject performed a particular action, such as depressing a lever or pecking a disk. With the operant conditioning chamber attached to a recording device, Skinner was able to discover schedules of reinforcement. These patterns are the basis for organismic interactions with the environment and are explored extensively in Schedules of Reinforcement and elsewhere.

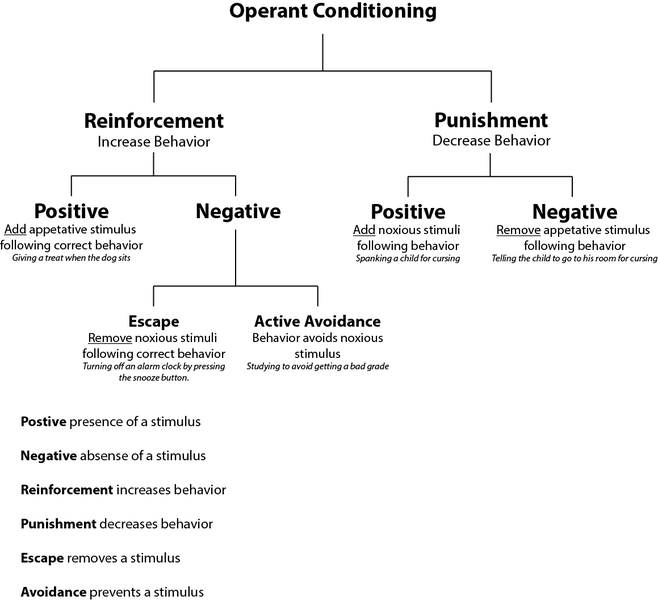

Five procedures were tested using the Skinner box. They are defined by the presentation or removal of a reinforcement or punishment. (Please note: here the terms positive and negative are not used in their popular sense. Positive refers to adding a stimulus, and negative refers to removing one.) The procedures are:

- Positive reinforcement (Reinforcement): Occurs when a behavior (response) is followed by a stimulus that is appetitive or rewarding, increasing the frequency of that behavior. In the Skinner box experiment, a stimulus such as food or a sugar solution can be delivered when the rat engages in a target behavior, such as pressing a lever. This procedure is usually called simply reinforcement.

- Negative reinforcement (Escape): Occurs when a behavior (response) is followed by the removal of an aversive stimulus, thereby increasing that behavior's frequency. In the Skinner box experiment, a loud noise can sound continuously inside the rat's cage until the rat engages in the target behavior, such as pressing a lever, at which point the noise is removed.

- Positive punishment (Punishment) (also called "Punishment by contingent stimulation"): Occurs when a behavior (response) is followed by a stimulus, such as a shock or loud noise, resulting in a decrease in that behavior. Positive punishment is sometimes a confusing term, as it denotes the "addition" of an aversive stimulus, or an increase in its intensity (such as an electric shock). This procedure is usually called simply punishment.

- Negative punishment (Penalty): Occurs when a behavior (response) is followed by the removal of a stimulus, such as taking away a child's toy following an undesired behavior, resulting in a decrease in that behavior.

- Extinction: Occurs when a behavior (response) that had previously been reinforced is no longer followed by the reinforcer. For example, a rat is first given food many times for lever presses. Then, in "extinction", no food is given. Typically the rat continues to press, more and more slowly, and eventually stops, at which time lever pressing is said to be "extinguished."
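
The four reinforcement and punishment procedures above can be read as a two-by-two grid: a stimulus is either added or removed after the behavior, and the behavior then becomes either more or less frequent. The following is only a minimal sketch of that grid as a lookup table; the names `StimulusChange`, `BehaviorEffect`, and `classify_procedure` are illustrative assumptions, not terms from Skinner's work. Extinction is left out because it is defined by withholding a reinforcer rather than by adding or removing a stimulus.

```python
from enum import Enum


class StimulusChange(Enum):
    """What happens to a stimulus right after the behavior (hypothetical naming)."""
    ADDED = "added"      # "positive" in the technical sense
    REMOVED = "removed"  # "negative" in the technical sense


class BehaviorEffect(Enum):
    """How the frequency of the behavior changes afterwards (hypothetical naming)."""
    INCREASES = "increases"  # reinforcement
    DECREASES = "decreases"  # punishment


# The two-by-two grid described in the list above.
PROCEDURES = {
    (StimulusChange.ADDED, BehaviorEffect.INCREASES): "positive reinforcement",
    (StimulusChange.REMOVED, BehaviorEffect.INCREASES): "negative reinforcement",
    (StimulusChange.ADDED, BehaviorEffect.DECREASES): "positive punishment",
    (StimulusChange.REMOVED, BehaviorEffect.DECREASES): "negative punishment",
}


def classify_procedure(change: StimulusChange, effect: BehaviorEffect) -> str:
    """Return the name of the procedure for a given stimulus change and effect."""
    return PROCEDURES[(change, effect)]


# Food delivered after a lever press, and pressing becomes more frequent.
food_example = classify_procedure(StimulusChange.ADDED, BehaviorEffect.INCREASES)

# A toy taken away after an undesired behavior, and the behavior becomes less frequent.
toy_example = classify_procedure(StimulusChange.REMOVED, BehaviorEffect.DECREASES)
```

Here `food_example` corresponds to the lever-press example and `toy_example` to the child's toy example from the list.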

Using the Skinner box and the five procedures above, Skinner developed two important conditioning techniques: shaping through successive approximation (more often simply called "shaping") and backward chaining.

- Shaping: Occurs when more and more exaggerated versions of a behavior are rewarded, so that complex actions can be trained through small successive rewards. For example, if a pigeon turns its head left, some food is dropped. The next time, the pigeon has to turn a little further left to get the food. Eventually the pigeon can be trained to turn all the way around in a circle. In a more contemporary example of shaping, a teacher may give children stars for improvement towards targeted behaviors, to help all children learn.

- Backward Chaining: Occurs when a complex series of behaviors is taught "backwards", starting with the final step. For example, if the desired behavior is for a monkey to jump off a ledge, catch a rope, swing to a platform, and then climb to a banana, the steps can be taught in reverse order. First the monkey is placed on the platform, where it climbs to the banana. Then the monkey is placed on the rope, where it learns it can swing to the platform, climb up, and get the banana. Then it is placed on the ledge, where it learns it can jump onto the rope. In this way behaviors can be "chained" together.
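
Both techniques follow a simple procedure that can be sketched in code. The sketch below is a toy illustration under stated assumptions, not a model of real animal learning: the function names `shape` and `backward_chaining_stages`, the idea of measuring a response as a number (for instance, degrees turned), and the fixed `step` by which the criterion is raised are all hypothetical choices made for the example.

```python
def shape(responses, target, step):
    """Toy shaping by successive approximation.

    A response is reinforced only if it meets the current criterion.
    After each reinforced response the criterion is raised by `step`,
    up to `target`. Returns a log of (response, criterion, reinforced).
    """
    criterion = step
    log = []
    for response in responses:
        reinforced = response >= criterion
        log.append((response, criterion, reinforced))
        if reinforced:
            # Demand a slightly bigger response next time.
            criterion = min(criterion + step, target)
    return log


def backward_chaining_stages(steps):
    """Return the training stages for backward chaining.

    Training starts with the last step alone, then adds one earlier
    step at a time, so each stage always ends at the final reward.
    """
    return [steps[i:] for i in range(len(steps) - 1, -1, -1)]


# Pigeon example: responses are degrees turned to the left (made-up numbers).
turn_log = shape([10, 50, 50, 100], target=360, step=45)

# Monkey example from the text, listed in the order the full chain is performed.
monkey_stages = backward_chaining_stages(
    ["jump off ledge", "catch rope", "swing to platform", "climb to banana"]
)
```

In `turn_log`, the second 50-degree turn goes unrewarded because the criterion has already moved to 90 degrees. In `monkey_stages`, the first stage contains only "climb to banana" and the last stage contains the full chain.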

Below is a diagram which summarizes Skinner's findings as related to reinforcement and punishment (including examples).